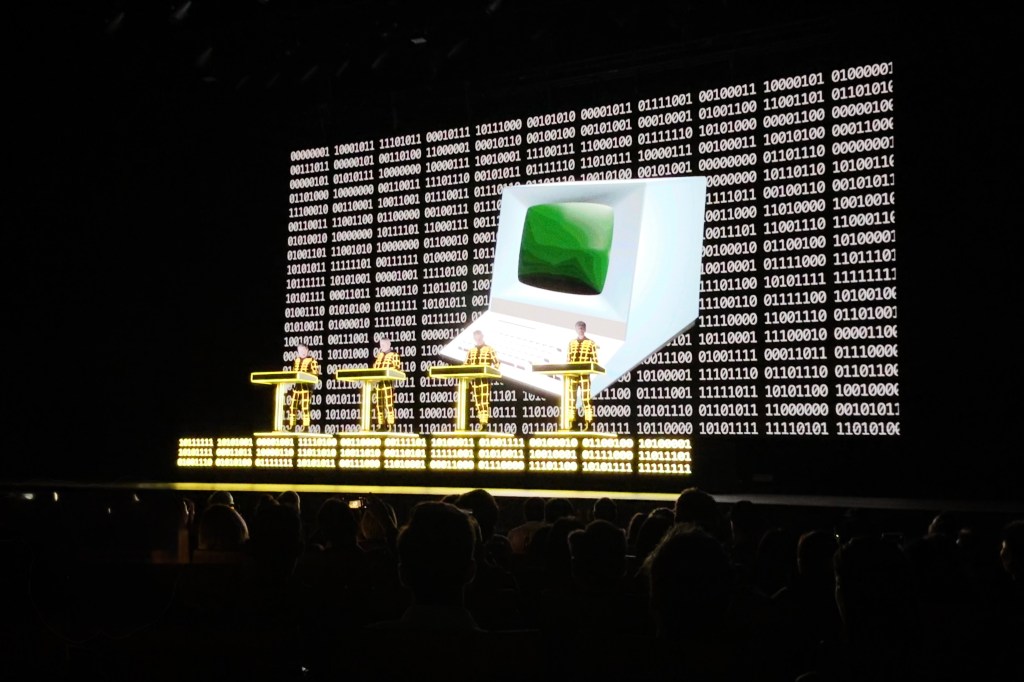

Brian was kind enough to think of me when he had an extra ticket to see Kraftwerk at their one-night-only local show on Friday. I was not even aware they were still alive, let alone touring. It turns out only one co-founder, Ralf Hütter, is left holding the project together. After some drama with the tickets (for a moment it seemed like we might not get in), we were treated to an hour and a half of classic electronica.

One effect of having a discography that spans five decades is that the music varies to an extreme degree. Their early material is rigid, with an almost classical approach to using synthesizers. Everything builds without resolving. This was electronic music before The Drop was invented. But Kraftwerk are necessarily more important than they are fun, which I mean as a compliment. Seeing where the structures and traditions originated helps you understand why what came after sounded so liberating. Their newer material has more swing, more layers and polyrhythms. I think Computer Love and the stuff from that era was my favorite of the night. As Brian said, it was a once-in-a-lifetime experience to see these OGs in action, and when I listened to Daft Punk’s Discovery on the way home I heard it differently.

The week was also marked by a dentist appointment I'd been dreading for a while. It was just to get a filling done, but I was told there'd be an injection and drilling involved. The visit was a rollercoaster: it started with an x-ray and the suggestion that the cavity might be in a difficult-to-reach location, and ended with a closer inspection (in which an injection and drilling were sadly involved) that found… no apparent cavity after all. The tooth has now been sealed with some material that will surely leak microplastics into my mouth, and we'll monitor it in future x-rays to make sure nothing was really going on in there. Fingers crossed.

In other sad news, Amazon Singapore has decided to sunset their Amazon Fresh grocery delivery service. It’s not my main source, but I appreciated their “free” (with Prime) next-day delivery and used it maybe every 4–6 weeks. Lately, they’ve been a primary source for sardines, pasta, and ice cream, if you wanted to know how balanced my diet is. The evil multinational corporation giveth and taketh away.

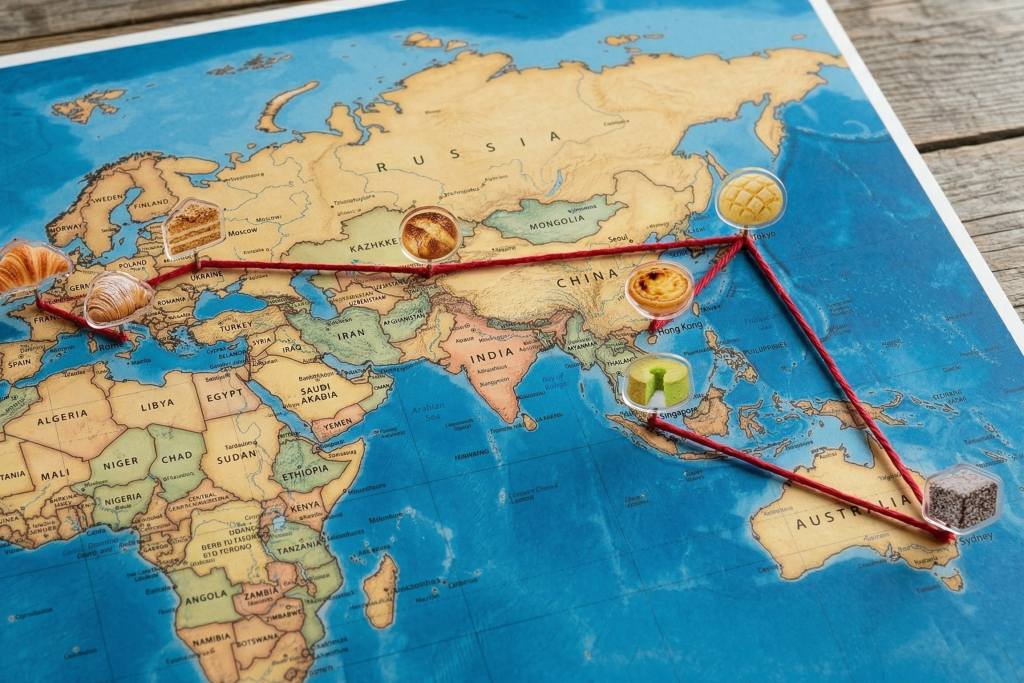

I'll still keep subscribing to Prime, though, because it lets me do terribly wasteful things. Last week in a Tokyo bookstore, I saw English-language editions of Brutus magazine, decided I didn't want to carry them around all day and get them creased, and ordered them online for delivery to my home a week later, for virtually the same price. High off the Snoopy Museum visit, I also ordered these two big, lovely Made-in-Japan mugs that will be my daily tea delivery vessels.

Kim got me a copy of My Beautiful Dark Twisted Fantasy on vinyl for my birthday, but it's only just arrived. I have yet to play it, but the artifact is heavy, substantial, important. It's no exaggeration to call it one of the best albums of all time, and it has consistently given me goosebumps for the last 15 years.

Peishan and James also got me a couple of records, and one of them was a Record Store Day ‘preview’ of two tracks from some upcoming John Coltrane releases that were not on my radar. The Tiberi Tapes are a legendary collection of secretly recorded live sessions of Coltrane in the 1960s, made by saxophonist Frank Tiberi. The recordings were imperfect, but new digital technology has made them fit for release, and Impulse Records is set to unleash a bunch of them soon (it’s Coltrane’s centennial year).

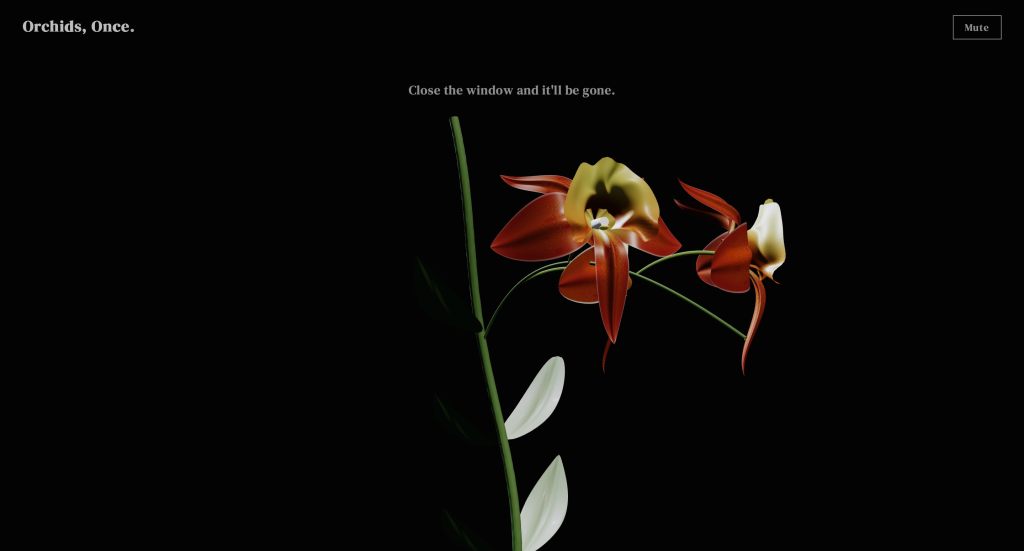

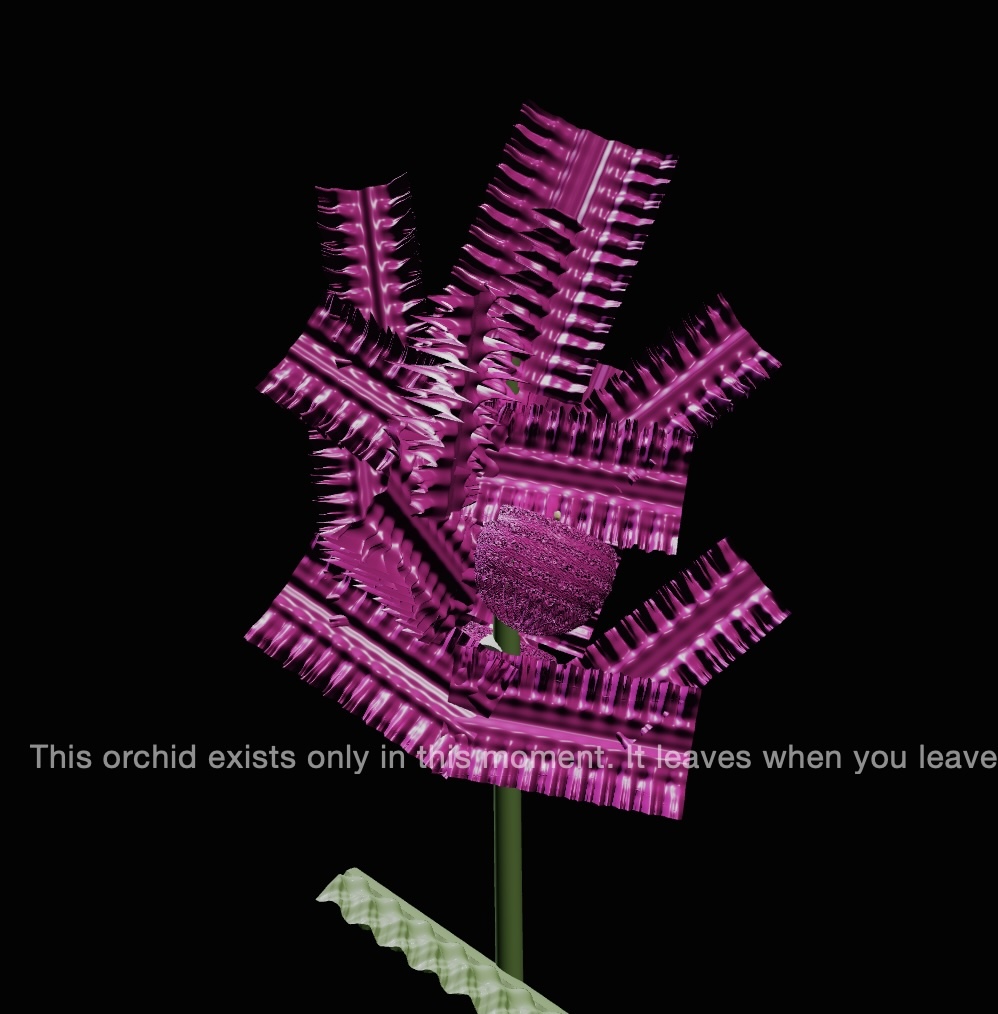

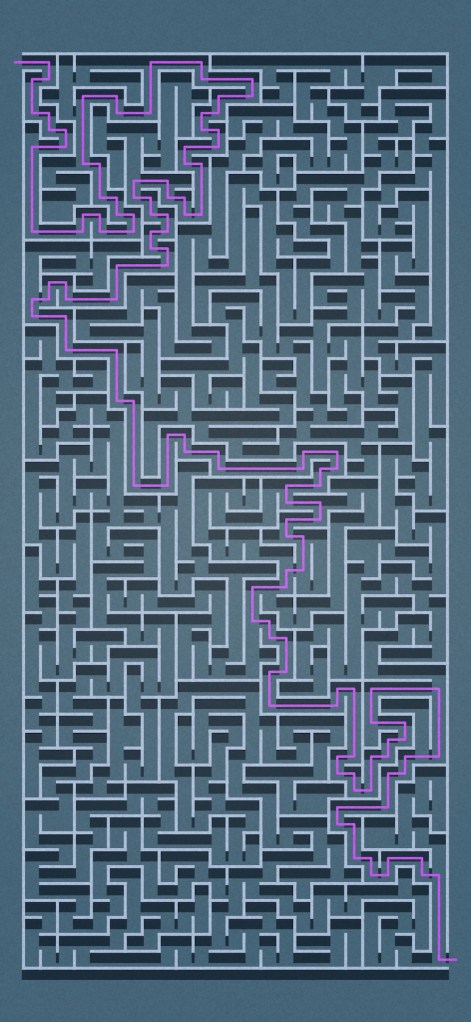

A few weeks ago, I released Orchids, Once. and several people independently told me that the procedurally generated music was good for having on in the background while they worked. That gave me the idea to make something designed to sit in a browser window on a second screen (or in the background) keeping you company throughout the work day with music and visuals.

My first idea turned out to be too ambitious, well beyond my current abilities in graphics and animation. I got a prototype working, but it wasn't worth going further. So I pivoted to a new idea, once again leveraging the orchid models I'd already made so I could get started quickly.

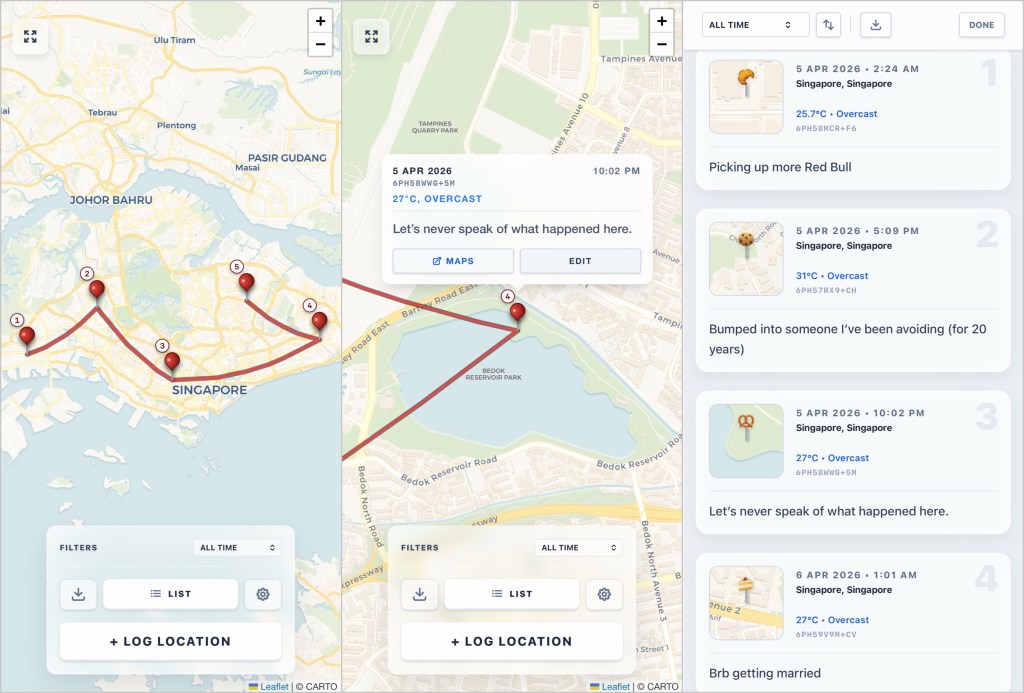

Window Box is the result. It simulates looking out the window of an apartment, seeing a planter box of flowers set outside the windowsill. I’ve never actually seen one of these in real life; I think I first encountered them on Sesame Street as a kid and thought they were cool.

You can currently choose to be in Singapore or Honolulu. There's dynamic real-time weather and lighting pulled from the Open-Meteo API to reflect current conditions in either location. There's an incredibly beautiful (if I do say so myself) rain animation system, along with environmental sounds. I also came up with a neat blending technique to transform the photographic backgrounds to reflect time of day and weather.

Instead of doing more procedurally generated music, I decided people would want real music, so there’s a radio tuner with a handful of curated stations. That includes Apple Music Radio just because I think more people should listen to their shows! There’s also a great Hawaiian station, KAPA-FM, which is a treat when you’re using the Honolulu location.

And just for you readers of the regular blog, here’s a hidden feature: click the app title in the top left 20 times and it’ll unlock bird sounds to complete the scene.

Media activity

- We watched Season 2 of Beef on Netflix. I was primarily excited for the casting of Carey Mulligan, Cailee Spaeny, and Oscar Isaac, but wasn't keen to see more of the same petty adversarial conflict from the first season. Well, be careful what you wish for: my chief complaint is that it has so little connection to the first season and the concept of beefing that I think it should just have been a different show. This one raises the class warfare stakes tremendously, goes much darker, and then ends in a tonally unexpected way. Maybe the best Netflix Original in a while.

- I've been playing more Path of Mystery: A Brush with Death, the new Japanese murder mystery adventure game on Switch that I mentioned back in January. It's an above-average game for the genre, and I'd readily recommend it. The chapters are structured and presented like television episodes, which makes it perfect for playing in a couple of short sessions. Each one opens and ends with (skippable) animated credits, and there's a short "next time on…" video afterwards to give you a preview of the following episode. I haven't seen this done before, and it adds to the enjoyment of a story that is both interesting and occasionally funny.

- Speaking of episodic anime, I got back into Frieren to try to finish the first season now that a second season is out. Earlier episodes were easy to space out over long stretches of time, but the final arc with the First-Class Mage Exam is surprisingly addictive and bingeable. I watched the last 11 episodes in 24 hours. I'm not one for fantasy settings, but Frieren is brilliant, especially in how it explores the perspective that comes with a longer lifespan and outliving all your friends.