By the time this goes live, I should be in Tokyo. We picked this week to go because Kim thought that there would be a lull at work. That did not turn out to be true, nor is it particularly good timing by any measure: it will be Japan’s Golden Week holidays, a notoriously busy and crowded domestic tourism season, plus there was just a massive 7.7 magnitude earthquake off the northeast coast this week. I believe the Japanglish phrase would be Ohwellganai.

24 hours until takeoff and I still haven’t packed a single item. That’s either a sign I’m becoming a seasoned traveler (not likely) or that I’m taking this trip more casually than usual. Maybe it’s the fact that the weather is pretty mild and won’t require a different wardrobe from what I usually wear. I hope Tokyo is ready for my basic-ass black t-shirt and baggy jeans look. Compared to last year’s month-long stay, stopping by for a week this time feels really breezy.

I’ll often obsess over what camera to bring on a trip, but this time the decision is much easier. For one thing, my top pick, the Ricoh GR III, has decided to completely lock up, physically. All its critical buttons are stuck and gummed up with either dust or crystallized substances (not for the first time, but worse than ever). This doesn’t happen to any other line of camera I’ve owned. The GRs are brilliant little things but their build quality and reliability have sadly been weak spots.

Secondly, the cameras in the iPhone 17 series are the best they’ve been in years. I’m okay just shooting with the native app in HEIC and editing photos with its “next-generation Photographic Styles”. Or I could shoot ProRAW and edit them in Halide Mk3 too, but it’s mostly extra work and not essential like it was a couple of years ago when Apple’s Smart HDR lost the plot.

This week was also a birthday week, so there was altogether too much eating, and that’s never a great idea before a holiday where you’re already destined to put on a few kilos. It involved too many curry puffs, pizzas, roast lamb, pastas, and pâtés. I didn’t buy myself anything more than a 10th anniversary copy of To Pimp A Butterfly on vinyl. I decided that since I’m managing to get a lot done with my M1 MacBook Air, upgrading to an M5 isn’t something that would really excite me at all. Making the most of this five-year-old machine is more satisfying, so I could conceivably wait for the M6 model.

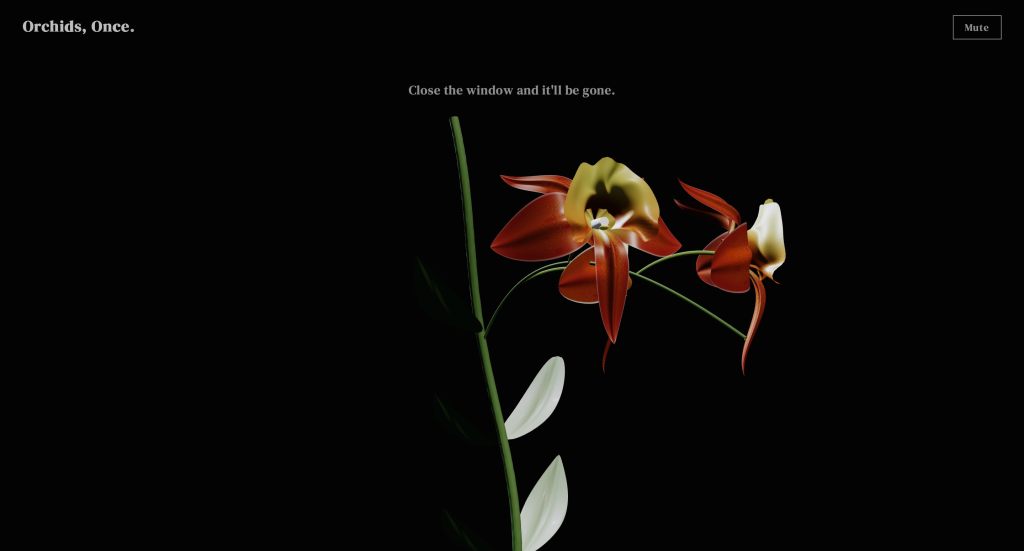

This week I once again repurposed existing parts to make more new things. Last week’s work on the orchids was too intricate to use only once (pun unintended). So I ported the math to my procedural artwork generator to create a new style called Orchids Forever, where I can stage them with different lighting conditions and make wallpapers.

Because Cien said she enjoyed having the music from Orchids, Once. in the background as she worked, I started to think about making a thing that was designed to sit in the background of a workday. The first idea that came to mind was sadly too complex for me to pull off (for now), so I started on another that places a few orchids in a flower box outside a window, looking out over the Singapore skyline. The idea is that it lets people anywhere pretend they’re in Singapore, looking out over a scene that changes with the time of day and actual weather.

The day after I made it, my ex-colleague Tobi over in Germany said he misses Singapore, so I sent this over to help. Rather than reuse the procedurally generated music from Orchids, Once., which would be completely stripping that work for parts, I integrated a free Apple Music Radio player, which makes me happy because more people should hear their live stations.

While reflecting on all this, I’ve started to think there are three camps of people making things with AI. The first, like me, wants to design experiences and outsource the coding. The second wants to code and outsource the design. The third just wants to see things made and doesn’t care much about either.

This is an enthusiast market, and people are even buying curated Markdown prompt files that promise to enforce design and/or development “best practices,” trying to compensate for not knowing what good looks like. But I’m still skeptical that the general public will want to generate their own custom apps. Most people might create a widget or two to solve a personal problem, but that’s it.

The real unlock for wider consumer vibe coding will be raising the quality of AI-generated UX design. Nobody scrutinizes generated code, but bad design can be felt instantly. Better design defaults might increase the numbers in camps two and three: the people who just want a thing made and don’t particularly have an idea how it should look or work, but would still notice if it was ugly or confusing.

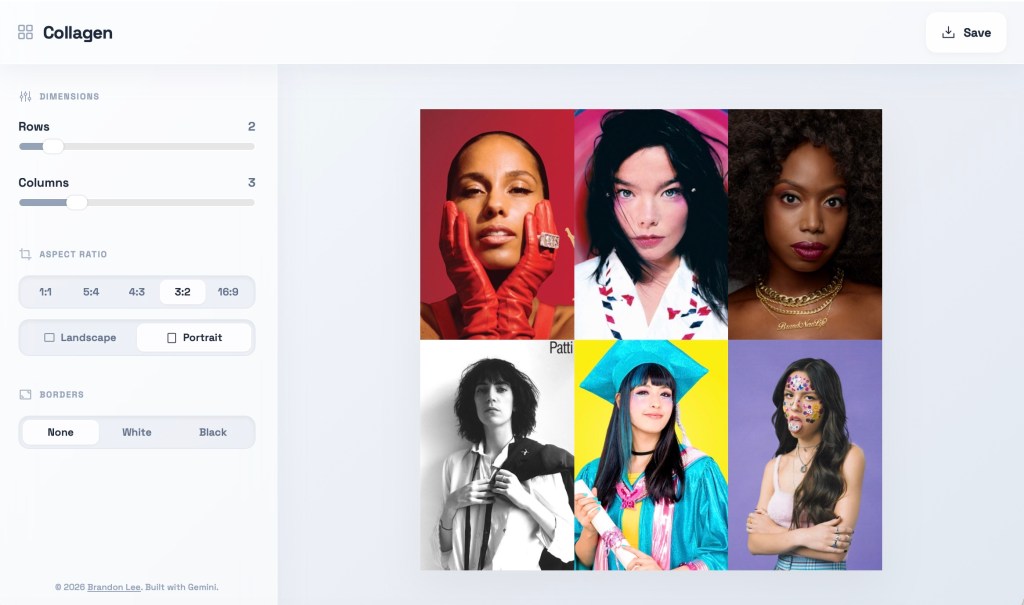

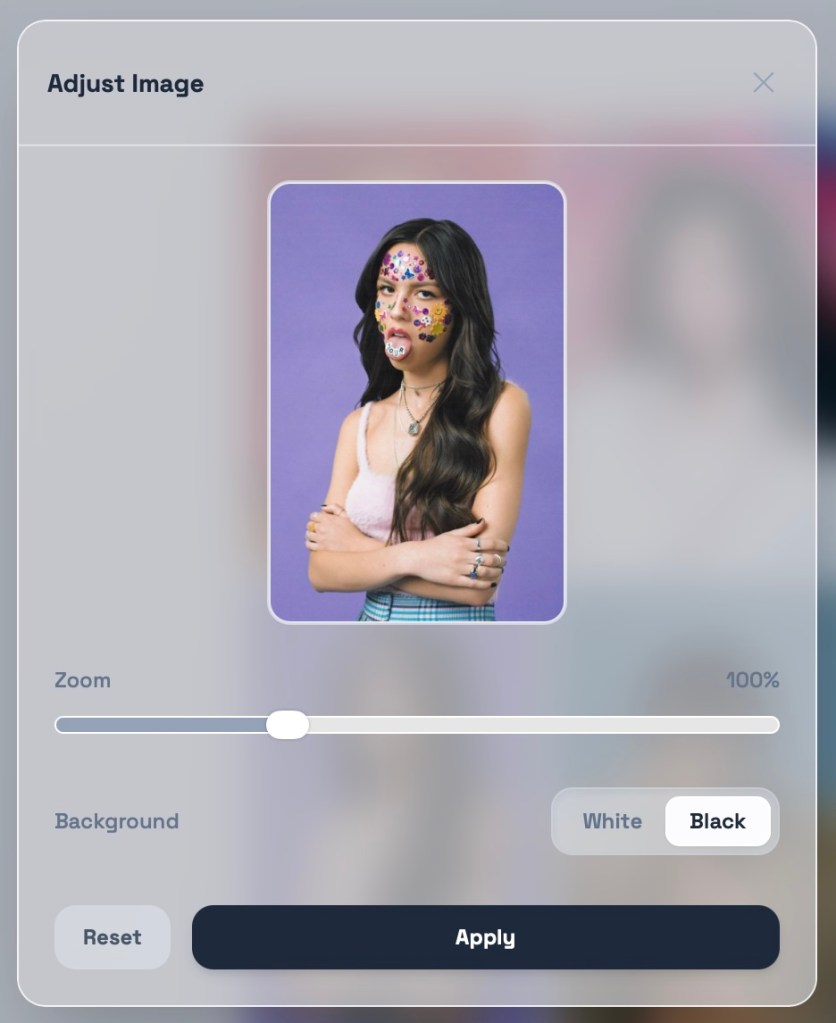

Claude Design, released this week, might be a Trojan horse for exactly this. Although seemingly positioned as the anti-Claude Code, with a focus on front-end design and visual prototyping rather than coding (making it a tool for the first camp), it’s still going to make design more accessible for all makers, even the code-oriented ones. It’s worth noting Figma’s stock fell 7% after the announcement.

The secondary effect — already playing out in layoffs I keep hearing about — is a devaluation of designers for common production tasks. This drum is being banged by every dimwit on LinkedIn so you know it’s well underway. Most designers will have needed to start burrowing deeper into their organizations yesterday, into strategy and human-centered decision making roles. Service and business designers should have had a head start, but this is a game of musical chairs and someone’s taking out half the chairs.

- I watched the movie Sphere (1998) with my book club and while I expected it to be possibly racist or sexist, I didn’t think it would be as offensive as it was. It’s godawful. I didn’t hesitate to give it 1 star on Letterboxd. There must be an interesting story behind how Barry Levinson came to direct an undersea horror film based on a hit sci-fi novel by Michael Crichton, starring Dustin Hoffman and Sharon Stone among others, and have it come out so unwatchable and incoherent. The effects, both practical and computer-generated, are laughable. And this was just a year before The Matrix.

- We finished Company Retreat, the new hidden camera show from the makers of Jury Duty. The premise is that a normal person is chosen to temp at a company that’s going on its annual team-building retreat, except everyone else is an actor. They put him through absurd situations that test his character, and like in the first show, the mark turns out to be an unbelievably good human being. The scale of the con is much larger this time, and the behind-the-scenes content is as interesting as the main story (if not more so). I think they went just a little too far with some of the characters this time, to the point where you think he must have known this wasn’t normal.

- I’m currently reading another goddamned Japanese cozy novel, except this one seems to be worth the paper it’s printed on. Letters from the Ginza Shihodo Stationery Shop seemed like an appropriate choice given that district is where we’ll be staying. Like some of these other trash tomes, it’s a bunch of intersecting short stories centered on the titular shop. This time, the stories are actually kinda interesting and have emotional cores that work: stories of everyday people trying to write letters to resolve personal issues. Rob asked if it was appropriate for his 12-year-old (that’s about the reading grade for these books), and I said yes, as long as you can explain the concept of a hostess club to him.

- I’ve also begun reading Oliver Burkeman’s Four Thousand Weeks, a book that appeared on my radar a while back but whose apparent premise (life is only 4,000 weeks long, so what are you going to do about it?) scared me off. Then Ted mentioned it when we met up a couple of weeks ago and thought that I’d find its concepts familiar, and in line with how I’m already living. I took that as a tremendous compliment and permission to get started. I read the intro and first chapter on the plane, and they deal with the idea that you should embrace that life has time limits, and accept you’ll never be able to do everything. Not only is that okay, it’s how all people lived before our clock-watching, productivity-obsessed era. I couldn’t help but wonder if I was taking away the wrong conclusions, though, because when I think about how short life is, I think of how Whose Line Is It Anyway? is played. You may recall it’s the show “where everything is made up and the points don’t matter”.

- It’s Sunday night in Tokyo and I’m in bed rewatching Lost in Translation (2003) on local TV.