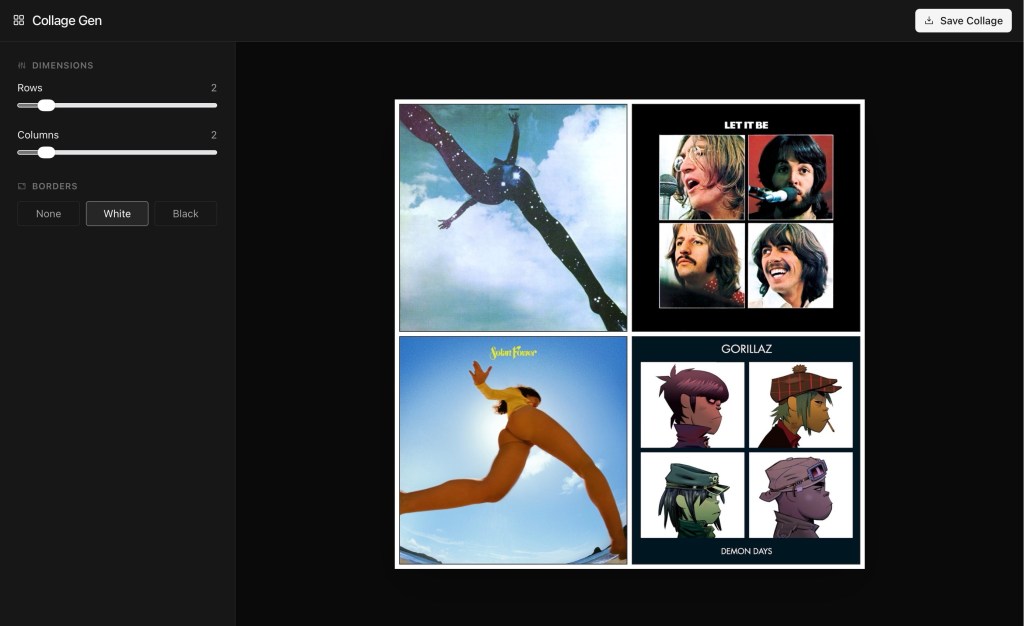

Last week I got started vibe coding with Gemini 3 Pro and was happy enough with the collage-making app I made that I deployed it to Netlify and posted a separate writeup for it here on this site. I also decided to rename it to Collagen, as in Collage-Generator, thanks to a suggestion from Michael.

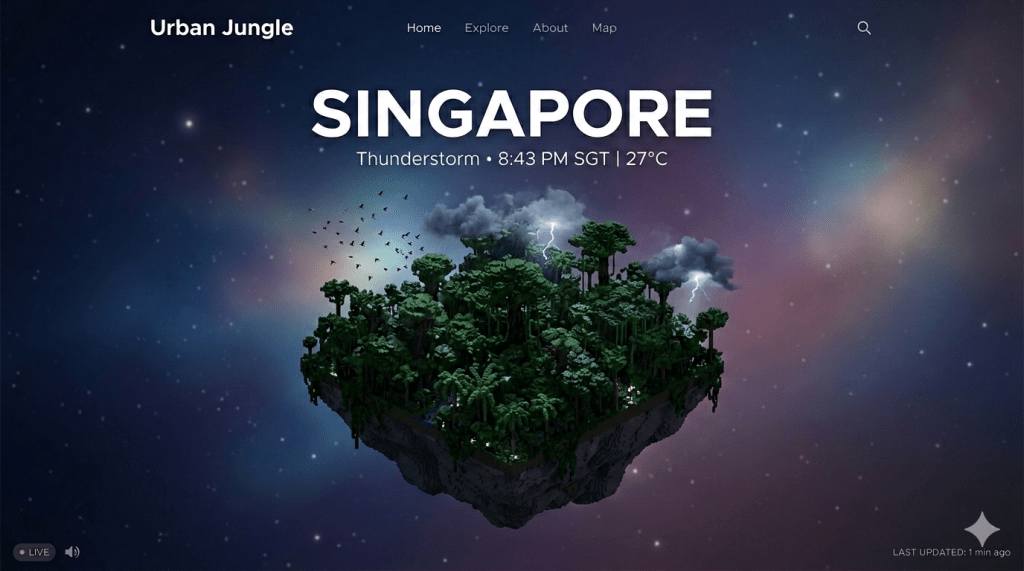

For my second project, I wanted to go much further and test the LLM’s ability to code up something more complex, with real-time 3D modeling and rendering. But what to make? One shower later* and I had a concept I was excited to try out: An app called Urban Jungle that would be a weather visualizer, depicting a world where humans have disappeared and our cities have been reclaimed by nature.

I could see it clearly in my head, and had the idea (in retrospect, a brilliant one that you should absolutely steal) that vibe coding projects should start just like real ones — with concept art. Taking the time to visualize what you want is the first test of whether it deserves to be built. It aligns the team behind a single vision, with fewer chances for miscommunication and wasted time.

I prompted Nano Banana 2 to generate a screenshot of Urban Jungle as if it were a finished product, describing it exactly how I wanted. The result was astoundingly close to what I’d imagined. With this visual in hand, I was able to brief the coding AI that much faster. Sure enough, the first prototype it spat out nailed the isometric view angle, UI, and core functionality.

That it could achieve a pseudo-3D effect with CSS and standard web technologies, writing the whole thing in a minute, was already blowing my mind. But like any difficult client, I thought “why not ask for more and see how far I can push my luck?”

The next version (v1.0) was a total rewrite of the graphics engine, now in full 3D using three.js. Each city is procedurally generated to be unique, with different forest/jungle topographies depending on the region. The increased detail meant I could add decaying buildings, pylons, and roads. When you tap on the trees, flocks of birds scatter. When it gets cold, the vegetation dies, and below 0°C the ground becomes covered in ice and the birds disappear. I thought… ‘this is great! I think we’re done!’
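For the curious, here is a minimal sketch of how rules like these could be wired up. It is not the app's actual code: the function names, the FNV-1a hash, the mulberry32 generator, and the 5°C "cold" threshold are all assumptions made for illustration. Only the 0°C freezing rule comes from the behaviour described above. The idea is that hashing the city name into a seed makes each city's layout unique but repeatable, while the temperature decides what state the scene is in.

```typescript
// Hypothetical sketch: deterministic per-city randomness plus temperature rules.

interface ClimateState {
  vegetation: "lush" | "dead";
  iced: boolean;
  birds: boolean;
}

// Assumption: the post only says vegetation dies "when it gets cold".
const COLD_THRESHOLD_C = 5;

// FNV-1a hash of the city name, giving an unsigned 32-bit seed.
function seedFromCity(name: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < name.length; i++) {
    h ^= name.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  return h >>> 0;
}

// mulberry32: a small seeded generator returning numbers in [0, 1).
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) | 0;
    let t = Math.imul(a ^ (a >>> 15), 1 | a);
    t = (t + Math.imul(t ^ (t >>> 7), 61 | t)) ^ t;
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Map a temperature to the scene state: ice and no birds below freezing.
function climateState(tempC: number): ClimateState {
  const freezing = tempC < 0;
  return {
    vegetation: tempC < COLD_THRESHOLD_C ? "dead" : "lush",
    iced: freezing,
    birds: !freezing,
  };
}

// Usage: the same city name always produces the same sequence of values,
// which could drive tree positions, building heights, and so on.
const rand = mulberry32(seedFromCity("Singapore"));
const treeHeights = Array.from({ length: 3 }, () => 2 + rand() * 8);
```

Because the generator is seeded from the name, reloading a city would rebuild the same skyline instead of a new one each time.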

But I should have known projects like this are never done. Next came v2.0, which rewrote the architecture to allow it to act like a proper weather app. You can now simulate the weather for any city over the next 24 hours, scrubbing through time with a slider. As you do, the lighting and climate effects change dynamically. It generates live sound effects for wind, rain, birds, and thunder. You can pan and zoom around the model with your fingers. There are now drifting clouds and proper lightning that strikes the earth during storms.
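One way a time slider like this could work is to interpolate between hourly forecast samples and derive a light level from the hour of day. The sketch below is a guess at the general technique, not the app's implementation: the `HourlySample` shape, the function names, and the fixed 06:00 sunrise and 18:00 sunset are all assumptions.

```typescript
// Hypothetical sketch: scrubbing through an hourly forecast with a slider.

interface HourlySample {
  tempC: number;
  cloudCover: number; // 0 = clear sky, 1 = fully overcast
}

function lerp(a: number, b: number, t: number): number {
  return a + (b - a) * t;
}

// Blend the two hourly samples on either side of the slider position.
// sliderHours is clamped to the range the forecast actually covers.
function sampleForecast(hourly: HourlySample[], sliderHours: number): HourlySample {
  if (hourly.length === 0) throw new Error("forecast is empty");
  const last = hourly.length - 1;
  const h = Math.min(Math.max(sliderHours, 0), last);
  const i = Math.floor(h);
  const j = Math.min(i + 1, last);
  const f = h - i;
  return {
    tempC: lerp(hourly[i].tempC, hourly[j].tempC, f),
    cloudCover: lerp(hourly[i].cloudCover, hourly[j].cloudCover, f),
  };
}

// Light level in [0, 1] for a clock hour in [0, 24).
// Assumption: sunrise at 06:00 and sunset at 18:00 everywhere.
function sunlight(hourOfDay: number): number {
  return Math.max(0, Math.sin((Math.PI * (hourOfDay - 6)) / 12));
}

// Usage: halfway between the first two hourly readings.
const forecast: HourlySample[] = [
  { tempC: 10, cloudCover: 0 },
  { tempC: 20, cloudCover: 1 },
];
const now = sampleForecast(forecast, 0.5);
```

Interpolating rather than snapping to the nearest hour is what would keep the lighting and weather changing smoothly as the slider moves.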

Comparing the concept art with the ‘finished’ product, I’d say I got as close as one could hope with a web app contained in a single HTML file. Here’s a standalone post about the app, which brings me to another advantage of vibe coding with an LLM: these things write their own “App Store”-like product copy!

Try Urban Jungles at urbanjungles.netlify.app

Edit: I couldn’t leave it alone, and after writing the above I made so many changes that I had to add a version history link on the front page. Now in v3.1, there’s an animated starfield in the night sky, and a freakin’ VR mode for Apple Vision Pro! It uses WebXR to place you inside a 3D environment with the city model floating in front of you. And after a couple of people suggested I add iconic landmarks like the Eiffel Tower, I decided that we could have a few as Easter eggs in major cities. Customizing every city to get full landmark coverage of the world would be too much, even for me. But, err, check back next week, you never know.

It strikes me that vibe coding with generative AI is modern-day Lego. It lets kids and adults alike build silly (or serious) things straight from their imaginations. It’s extremely fun and educational to express yourself in this way, if you just look at it as an advanced toy. The difference is that no one is using Lego to build a working car, or furniture, or anything that can be exchanged for money. But LLMs are already used in the building of most commercial software, and the proportion of their contributions is only going to grow.

But as a hobbyist with little coding experience, I’m worried about how desensitizing this new ability can be, and how it will dull our ability to wait for good things. It will heighten our time preference, in other words. While it was downright exhilarating to see my idea come to life in minutes instead of weeks, or never, I know it has already rewired my brain. I expect this now. The next app I make will be judged more harshly; they all will be, now that I know how “easy” this is. Patience is going to be impossible, and that’s bad for everyone.

*But what was that asterisk up there with the shower thought? It occurred to me later that maybe I came up with Urban Jungle because of The Wall, which I read last week. To reiterate, it’s a survival story that takes place after an Event seemingly wipes out all of humanity save for our female protagonist. She lives in a lodge in the Austrian Alps, getting by on limited matches, ammo, and medications. She’s constantly battered by storms and weather conditions, fighting a slow, losing battle against nature. That imagery must have stuck in my head.

After that recent aggressive reading spell, I slowed down and decided to chill with one of those cozy Japanese books that are still so popular — you know the ones, set in convenience stores, or bookstores, or cafes, or the backseats of taxis, where absolutely nothing important happens apart from a mild mental breakdown brought on by social anxiety and ennui, aka living in Japanese society. I’ve semi-enjoyed a few of these before, most notably Michiko Aoyama’s What You Are Looking for Is in the Library.

But even those lowered expectations could not have prepared me for the absolute waste of paper/pixels that is Atsuhiro Yoshida’s Goodnight, Tokyo (translated by Haydn Trowell and published by Europa Editions). I mention all involved parties because the blame for this should be shared. Multiple people started work on this, knew what they had, and decided to keep going. I can only guess the motivating factor was profit and cashing in on this cozy Japanese book trend. I hope it was worth it.

I am now reading Olga Tokarczuk’s Drive Your Plow Over the Bones of the Dead (translated by Antonia Lloyd-Jones), and it is soooo much more deserving of your time. Then again, she’s a Nobel laureate, so perhaps it’s not a fair fight. But that’s the thing about books versus things like Michelin restaurants: the good ones cost about the same as the bad ones.

I’ll leave you with some non-AI photos I took on a walk yesterday as a palate cleanser.