Christmas is creeping closer, but the Goodreads Challenge angel won’t be darkening my doorstep as I’ve redeemed myself with two weeks to go! James Hogan’s Thrice Upon A Time was the twelfth book of my year, and definitely one of the better ones. It’s a 1980s time travel story where no time travel takes place, but it grapples with ideas about how timelines are rewritten, plus some other global topics that seem quite prescient when read today. Stylistically, it’s aged, but in that classic sci-fi way I love, which takes me back to reading books in the library after school. I think those hours, that precious access back then to a ton of books I couldn’t wait to read, were the part of going to school I looked forward to most. Anyway, I’ve started a dumb new book that I should be finishing this year for bonus credit: The Paris Apartment by Lucy Foley.

If you’re looking for reading material, it may interest you to hear that I somehow managed to finish B’Fast, the AI-generated breakfast zine project I mentioned last week. The InstaZine GPT I made to create the content is also available through the same link (I updated the page with some additional usage tips today). Now that it’s done, I’m planning to make a companion breakfast-themed zine called “B’Fast (Brandon’s Version)” which will be made entirely by me, in a way that an AI presumably would not. But probably not straight away.

===

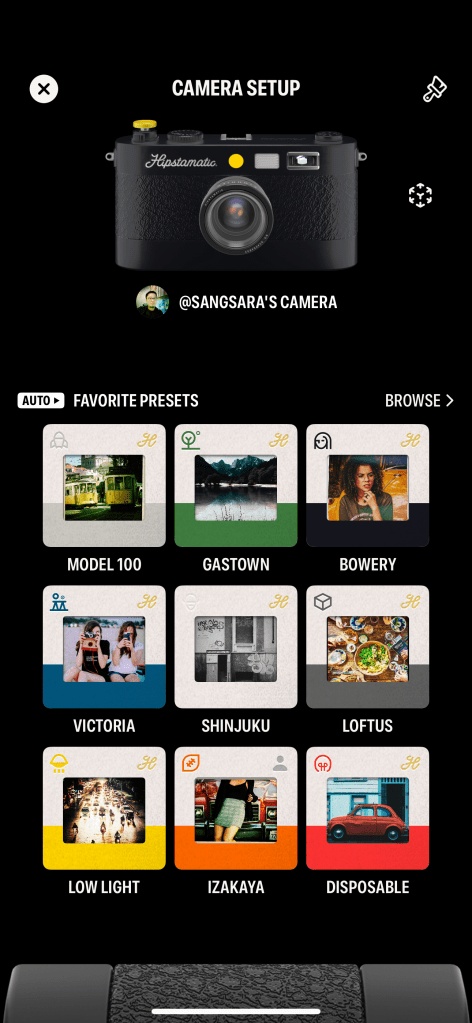

Earlier this year, Hipstamatic redesigned and relaunched their Hipstamatic X app. The “X” was dropped, and they added a new social feed. It was the official replacement for their original app, which became Hipstamatic Classic. Where the original was funded by in-app purchases for new filters (at a pace of roughly one new 99c release each month, or about $12 a year), the new Hipstamatic charges a $30/year subscription, more than doubling their income from faithful fans.

I used the new app for some photos during my trip to Japan and mostly enjoyed the experience, but it was too buggy and the UI was still too cluttered and confusing (a longstanding problem with the original Hipstamatic app as well) for me to consider continuing with a paid subscription.

Their main problem is that there are now over 300 filters in the forms of “films” and “lenses” and “flashes” that you can combine to make infinite looks, and no good way to make the attractive human-curated combinations they recommend accessible and discoverable. In the last version, they tried to give a few of these combinations the tangible metaphor of being unique “cameras”, each one with a different skeuomorphic body you picked off a shelf, but essentially they were presets you could call up. But you could keep, what, ten of these aside in a little drawer before you couldn’t tell them apart? And so many of the other combinations were left out of sight and out of mind.

Now, after a week of teasing social media posts, wherein a “physical camera” was shown in videos — quite obviously a 3D model rendered in AR, but some people believed they were going to release a hardware product anyway — they’ve released a major update (v10) that tries to untangle the Gordian knot of their UX issues.

In this new version, they’ve tried to marry what worked in the original app with a new info architecture and set of metaphors to manage the library of looks they’ve accumulated over the last 14 years. You get just ONE skeuomorphic camera to call your own and customize the look of, and this camera is capable of loading up many presets. You can either let the camera detect the scene and choose a suitable preset for it (Auto mode), or specify the preset yourself (Manual mode). There are 9 possible scenes, such as Travel, People, and Still Life, but in a puzzling and unfortunate move, when you start using the app, each of these scenes has just one or two associated presets. That means you’re going to see the same looks over and over, when there are over a hundred more hidden away in a long list. This was presumably done to allow you, the user, to customize your experience and assign your favorite presets to the scenes.

There are two major problems at this point. One: leaving it up to the user to gain their own understanding of all the pre-existing “good combos” and assign them to 9 scene categories is insane. It’s a lot of work to hand off to a customer you hope will pay you money. The team should be doing the work of tagging each preset combo with a recommended use case, AND making it easy to assign them. It’s not currently easy. I had to move back and forth between two sections of the app, looking at presets and memorizing their names so I could go assign them to a scene, because these things aren’t placed together. Off the top of my head, it just needs an in-line list of suggested presets (from the aforementioned tagging exercise) on the same screen where you customize a scene’s presets. Perhaps this is coming. I’d argue it should have been in the MVP release of such a big redesign.

Two: as I mentioned, there are infinite possible presets given the number of ingredients they’ve accumulated. You can make your own combos, but there’s no great way to experiment and do this — there should be a sandbox where you can explore each lens/film/flash’s characteristics and try them out in real time to find a good combo. There used to be a section of the app called the “Hipstamatic Supply Catalog” where you could browse all these effects (it was only like a static magazine, but they could have made it interactive), and this now seems to be gone or I can’t find it anymore in the maze of menus and buttons. Perhaps they’re okay with most users just using the curated “good presets” and never making their own, but it seems like a missed opportunity.

I was feeling a mix of optimistic and bored, so I paid for an annual subscription anyway and will be trying to take lots of everyday silly snaps with this, and maybe even use it on my upcoming trip to Thailand. But if you know someone who works at Hipstamatic, please talk to them about taking on some external advice.

===

- I finished watching Pluto on Netflix. It’s still a strong recommendation for me; a modern anime made with classic sensibilities and a story that really keeps you guessing. It’s also a very different Astro Boy story, suitable for people who hear “Astro Boy” and think it’s stuff for kids.

- We started watching A Murder at the End of the World and I’m really liking it so far. Especially its star, Emma Corrin, who I’ve never seen in anything else before. Their face is so strikingly similar to Jodie Foster’s that I was sure they were related.

- New playlist! BLixTape #3 is done, made up of mostly new songs that I’ve been listening to since mid-October. Add it here on Apple Music.