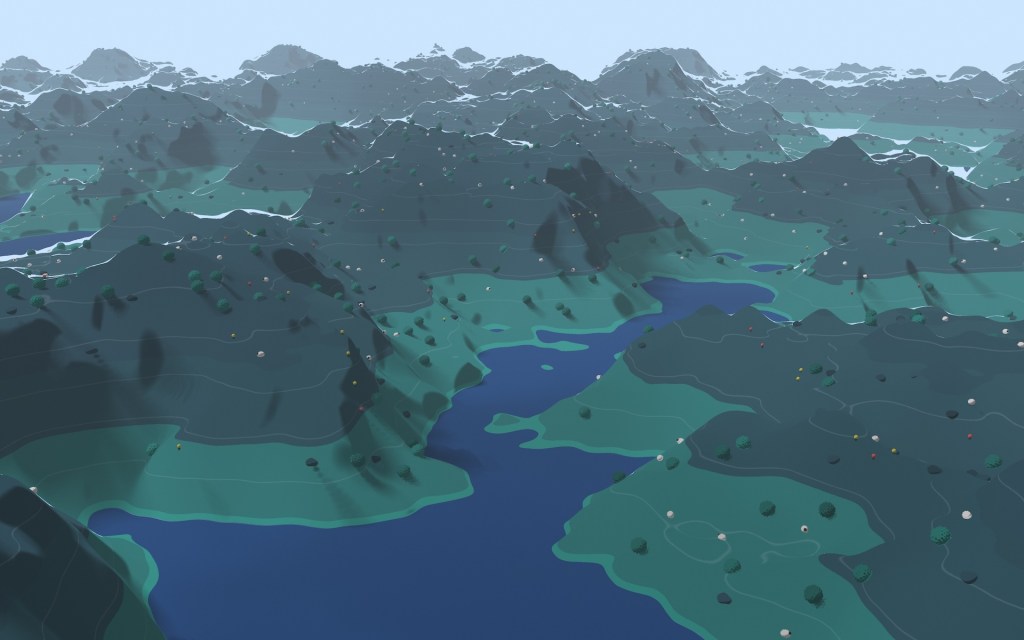

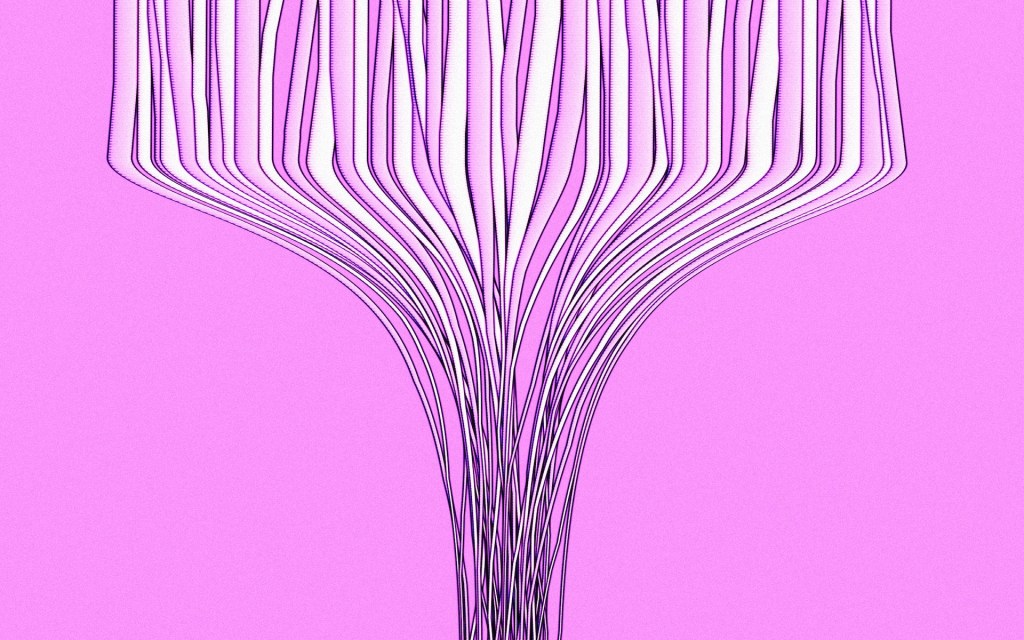

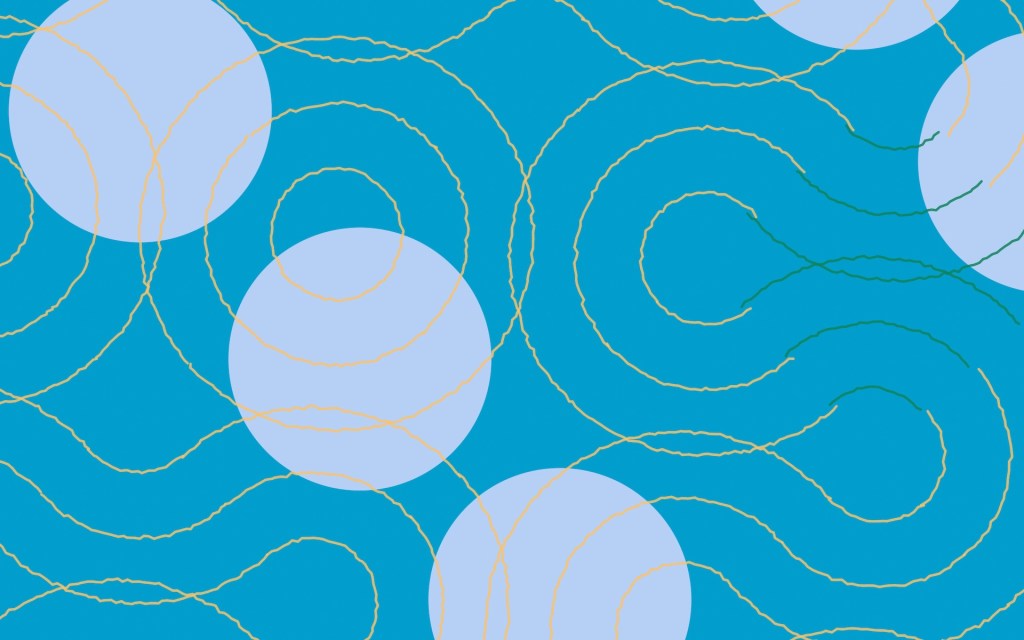

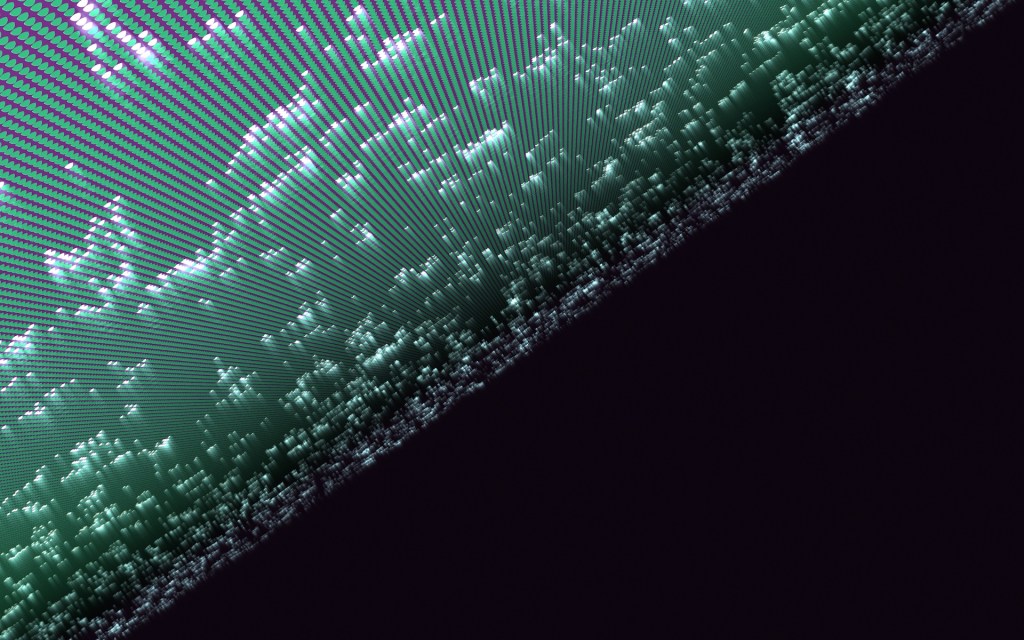

I’m looking through my camera roll to remember what happened this week and it’s mostly a bunch of “artworks” I’ve been making. Wait, let me step back: I’ve had an interest in procedurally generated graphics (GenArt) for a while, and it peaked with the NFT boom of 2021–22, when I spent a relatively obscene amount of money minting and collecting artworks I really liked (not the monkeys). I’m mostly drawn to the idea of mathematically rigid routines producing organic beauty: the contrast in that, and the unpredictability of what you get when you roll the RNG dice.

So after my recent experiments in making apps, I wondered if I could get AI to write me code that would generate images based on concepts I described. The answer is, of course, yes! It’s important to note this isn’t prompting for images (like when you use Midjourney or DALL-E); it’s prompting for the math behind making images. And once you’ve created the rules by which it draws different art styles, you can create a nearly infinite number of unique artworks by dialing different variables up and down.

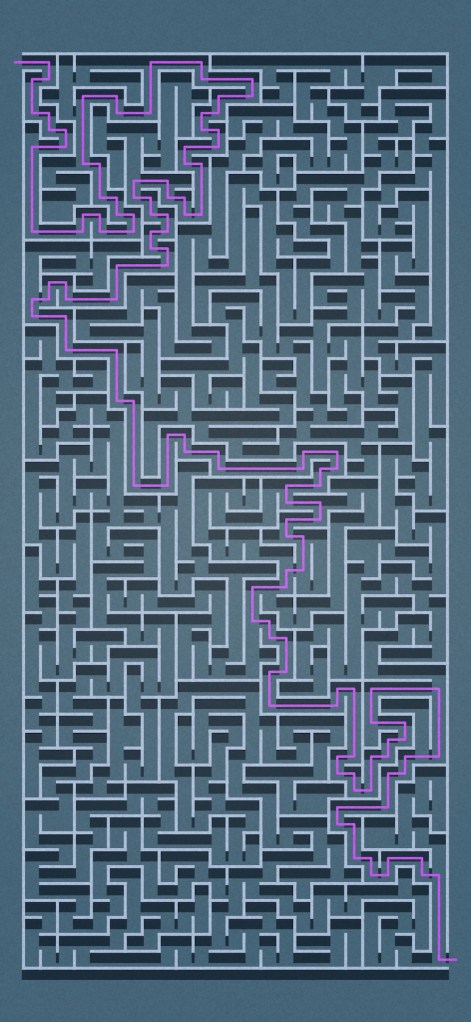

One example is a “style” I made called Labyrinth, which produces actual, solvable mazes. Depending on the variables you adjust, you can make mazes ranging from tiny to massive, with just one solution, or many. If you asked an image generation AI to draw a maze, it would likely lack the coherence of a real maze, because of the way it operates, focusing on the superficial appearance and not the integrity of its paths. But an AI model can write the math to draw a maze.
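For the curious, here is a minimal sketch of how "one solution, or many" can fall out of the math. The post doesn't say which algorithm Labyrinth uses, so this is an assumption: a seeded depth-first "recursive backtracker" that carves a maze with exactly one route between any two cells, followed by an optional pass that knocks out extra walls to create alternative routes. All names here (`generateMaze`, `extraOpenings`, and so on) are hypothetical.

```typescript
// Small seeded PRNG (mulberry32) so the same seed always yields the same maze.
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

interface Maze {
  width: number;
  height: number;
  links: Set<number>[]; // links[c] = cells you can walk to directly from cell c
}

// Grid neighbours (left, right, up, down) of cell index c.
function neighbours(c: number, width: number, height: number): number[] {
  const x = c % width;
  const y = Math.floor(c / width);
  const out: number[] = [];
  if (x > 0) out.push(c - 1);
  if (x < width - 1) out.push(c + 1);
  if (y > 0) out.push(c - width);
  if (y < height - 1) out.push(c + width);
  return out;
}

// extraOpenings = 0 gives a "perfect" maze (one route between any two cells);
// higher values remove more walls, so more than one solution becomes possible.
function generateMaze(width: number, height: number, seed: number, extraOpenings = 0): Maze {
  const rand = mulberry32(seed);
  const n = width * height;
  const links: Set<number>[] = Array.from({ length: n }, () => new Set<number>());
  const visited: boolean[] = new Array<boolean>(n).fill(false);
  const stack: number[] = [0];
  visited[0] = true;

  // Depth-first carve: walk to a random unvisited neighbour, backtrack when stuck.
  while (stack.length > 0) {
    const current = stack[stack.length - 1];
    const options = neighbours(current, width, height).filter((c) => !visited[c]);
    if (options.length === 0) {
      stack.pop();
      continue;
    }
    const next = options[Math.floor(rand() * options.length)];
    links[current].add(next);
    links[next].add(current);
    visited[next] = true;
    stack.push(next);
  }

  // Collect the walls still standing, then open a few of them at random.
  const walls: [number, number][] = [];
  for (let a = 0; a < n; a++) {
    for (const b of neighbours(a, width, height)) {
      if (b > a && !links[a].has(b)) walls.push([a, b]);
    }
  }
  for (let i = 0; i < extraOpenings && walls.length > 0; i++) {
    const k = Math.floor(rand() * walls.length);
    const [a, b] = walls.splice(k, 1)[0];
    links[a].add(b);
    links[b].add(a);
  }
  return { width, height, links };
}

// Number of open passages between adjacent cells.
function countPassages(maze: Maze): number {
  let total = 0;
  for (const s of maze.links) total += s.size;
  return total / 2;
}

// Breadth-first search: how many cells can be reached from `start`.
function countReachable(maze: Maze, start = 0): number {
  const seen = new Set<number>([start]);
  const queue: number[] = [start];
  while (queue.length > 0) {
    const c = queue.shift() as number;
    maze.links[c].forEach((next) => {
      if (!seen.has(next)) {
        seen.add(next);
        queue.push(next);
      }
    });
  }
  return seen.size;
}
```

A maze that reaches every cell with exactly `width * height - 1` passages has no loops, which is what guarantees a single solution. This is the kind of structural integrity an image model has no reason to preserve.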

I start most of these by thinking up an artistic production approach, say “take sheets of colored cardboard or acrylic, and punch holes of varying shapes into them, then layer them on top of each other so the holes line up (or not), and randomly spray contrast-colored paint on some of them”. Then I describe the possible variations and variables I want to control to the AI, such as the density of shapes, the thickness of the borders, the ratio between angular and organic lines, and we iterate after seeing some of the results. Just think of all the methods and ideas you might want to play with, and how this lets any old idiot model them on their computers!

The meta project is that I’ve made a modular app that handles all these different styles for me, whether they require a 2D canvas or WebGL. The app provides a common UI layer that all “styles” can plug into, which allows me to control them. Now that it’s done, I can just focus on experimenting and having fun making new artworks. I daresay a few of these are executed as well as any of those I spent money on.
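To make the "common UI layer" idea concrete, here is a rough sketch of what such a plug-in contract could look like. This is not the app's actual code: `StyleModule`, `ParamSpec`, and `StyleRegistry` are made-up names, and `render` returns a summary string instead of drawing so the example stays free of browser APIs.

```typescript
type Surface = "canvas2d" | "webgl";

// One adjustable variable that the shared UI could expose as a slider.
interface ParamSpec {
  key: string;
  label: string;
  min: number;
  max: number;
  initial: number;
}

// What every style has to provide in order to plug into the app (hypothetical).
interface StyleModule {
  id: string;
  surface: Surface;
  params: ParamSpec[];
  // A real style would draw to its surface; this sketch just reports what it got.
  render(values: Record<string, number>, seed: number): string;
}

class StyleRegistry {
  private styles = new Map<string, StyleModule>();

  register(style: StyleModule): void {
    if (this.styles.has(style.id)) throw new Error(`Duplicate style id: ${style.id}`);
    this.styles.set(style.id, style);
  }

  list(): string[] {
    const ids: string[] = [];
    this.styles.forEach((_style, id) => ids.push(id));
    return ids;
  }

  // Fill in defaults, clamp every value to its declared range, then render.
  run(id: string, overrides: Record<string, number | undefined>, seed: number): string {
    const style = this.styles.get(id);
    if (!style) throw new Error(`Unknown style: ${id}`);
    const values: Record<string, number> = {};
    for (const p of style.params) {
      const raw = overrides[p.key];
      const value = raw === undefined ? p.initial : raw;
      values[p.key] = Math.min(p.max, Math.max(p.min, value));
    }
    return style.render(values, seed);
  }
}

// Example plug-in; the parameter names and ranges are invented for illustration.
const labyrinth: StyleModule = {
  id: "labyrinth",
  surface: "canvas2d",
  params: [
    { key: "size", label: "Grid size", min: 4, max: 200, initial: 20 },
    { key: "loops", label: "Extra paths", min: 0, max: 50, initial: 0 },
  ],
  render: (values, seed) =>
    `labyrinth size=${values["size"]} loops=${values["loops"]} seed=${seed}`,
};

const registry = new StyleRegistry();
registry.register(labyrinth);
```

Because each style declares its own parameters, the shared layer can build controls and validate input without knowing anything about how a given style draws.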

I’ll probably release it as a wallpaper generator once I have enough styles built in, if anyone’s interested. But mostly I love having this as a background project that I can dip into, on and off. It allows me to take on other app ideas as momentary “side quests”.

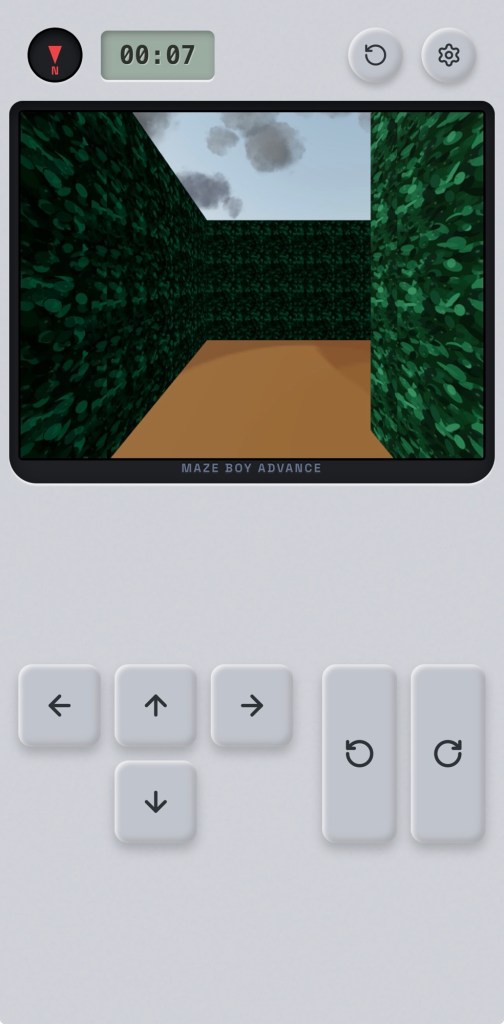

While making Labyrinth, I showed a maze to Cong, who said “You should do a puzzle maker”. To which I said, “Nah.” And then a minute later… “Although, a daily maze game. Hmm.” It made sense that I could save time by taking CommonVerse’s daily random generation mechanic and combining it with Labyrinth’s logic to make a daily maze challenge. But would it even be fun to trace a 2D maze with your finger and try to solve it? No… so what if it was a 3D maze you had to escape?
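A daily mechanic like this usually comes down to turning the calendar date into a seed, so everyone gets the same puzzle on the same day without a server handing it out. How CommonVerse actually does it isn't described here, so treat the following as one plausible sketch: it hashes the UTC date with FNV-1a and feeds the result to a small xorshift generator. The function names, the `"daily-maze"` label, and the grid size range are all assumptions.

```typescript
// 32-bit FNV-1a hash of a string, returned as an unsigned integer.
function fnv1a(text: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0;
}

// "YYYY-MM-DD" in UTC, so the puzzle rolls over at the same instant for everyone.
function utcDateKey(date: Date): string {
  return date.toISOString().slice(0, 10);
}

// The game id is mixed in so two different daily games don't share a seed.
function dailySeed(date: Date, gameId = "daily-maze"): number {
  return fnv1a(`${gameId}:${utcDateKey(date)}`);
}

// Tiny xorshift32 generator returning numbers in (0, 1).
function xorshift32(seed: number): () => number {
  let state = (seed >>> 0) || 1; // xorshift must not start at zero
  return () => {
    state ^= state << 13;
    state ^= state >>> 17;
    state ^= state << 5;
    state >>>= 0;
    return state / 4294967296;
  };
}

interface DailyChallenge {
  dateKey: string;
  seed: number;
  gridSize: number;
}

// Everything about the day's puzzle is derived from the date alone.
function dailyChallenge(date: Date): DailyChallenge {
  const seed = dailySeed(date);
  const rand = xorshift32(seed);
  const gridSize = 8 + Math.floor(rand() * 9); // 8 to 16, an arbitrary range
  return { dateKey: utcDateKey(date), seed, gridSize };
}
```

The resulting seed could then be passed to whatever generates the maze, which is what makes combining the two projects cheap: the daily part only has to agree on a number.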

The first prototype took a couple of hours, and I’ve been polishing it for the last few days. I think it’s coming along nicely. I’ll put it out soon, once I balance the difficulty and get more feedback from testing.

The development of [redacted game name] was hampered by a rare bar crawl with Howard and Jussi on Thursday night that gave me a massive hangover lasting into Friday afternoon. When I got home, I was too plastered to care that my vinyl copy of J Dilla’s Donuts had arrived from Amazon US protected by nothing more than a flimsy paper envelope. By the clear light of day I was amazed that they would even do such a thing. The discs are intact, but the sleeve has a bent corner. If I’d ordered from Amazon Japan, I would bet a major internal organ that it would come wrapped in four layers of stiff cardboard, bubble wrap, and a handwritten apology for their carelessness.

Did I mention we’re going to Japan again? It’ll be a short vacation, in a couple of weeks’ time. Not much on the agenda, just checking in on the state of curry rice and egg sandwiches. Maybe see some nice art. Take some photos.

Which brings me to the latest betas of Halide MkIII, which I’m very much looking forward to using on the trip. They’ve been progressing the app nicely, and it might be enabling the Holy Grail of iPhone photography workflows for me. Ironically, it involves using Halide not as a camera app, but just as a photo editor. You can shoot compact (lossy, JPEG XL compressed) ProRAW photos up to 48MP with the default camera app, then edit them in Halide to have the same look as their Process Zero photos! What this means: you get all the benefits of computational photography at time of capture, including noise reduction and night mode, but you’re also free to dial it back and get natural, “real camera” photos in post if the scene calls for it.

As much as I like these side quests, I think making my own photo editor would be biting off entirely too much to chew, so I’m still rooting for these guys to crack it.

While writing this post, I got the news that an elderly aunt passed away at the age of 93. She had been in reduced health since the Covid years, but by all accounts she went very peacefully and I guess you can’t ask for much more than that after a long life. The extended family’s Chinese New Year routines fell apart in recent years after she pulled back from organizing them, so it was fitting that some of us got to reconnect at her wake on Sunday evening.

See you next week.
